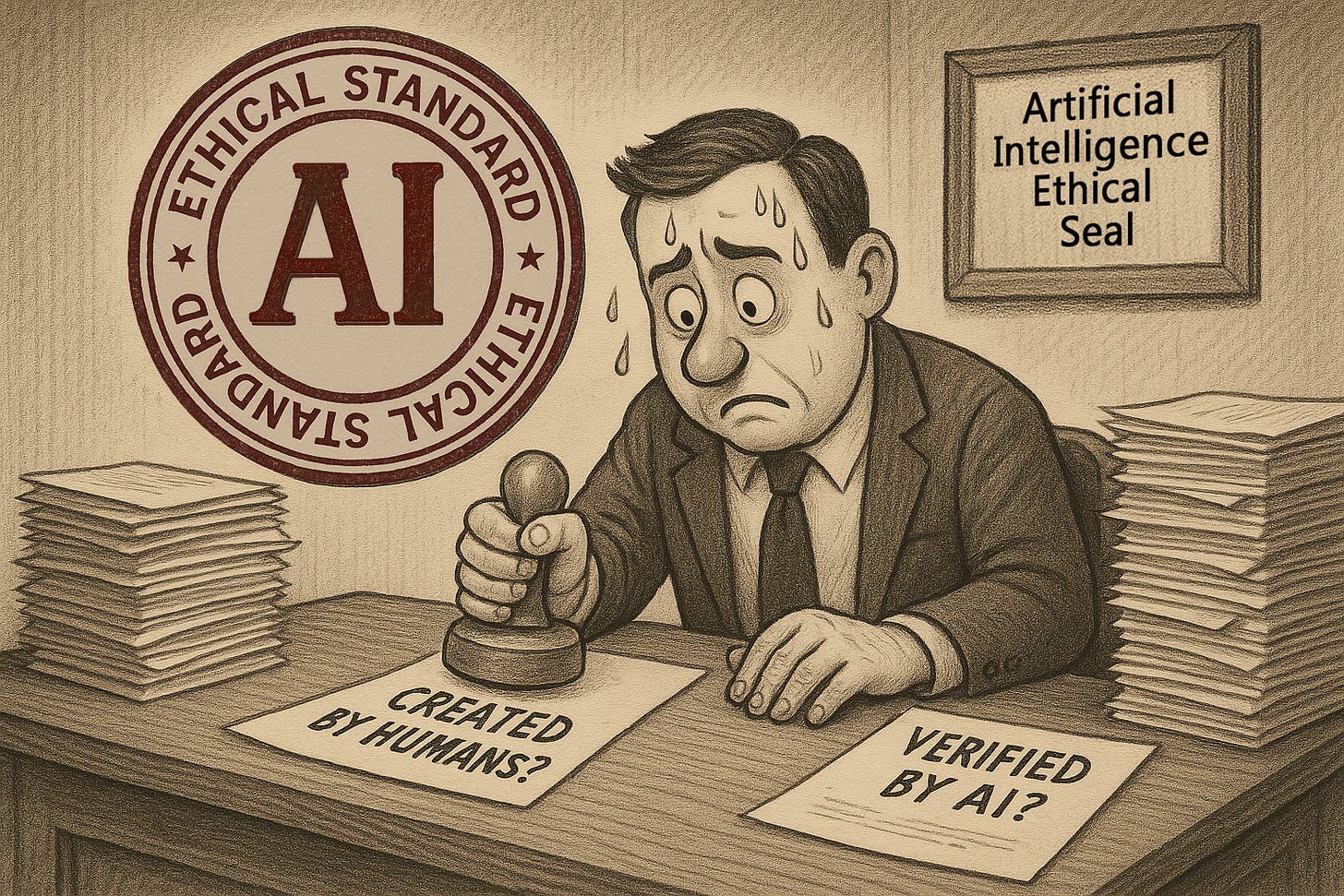

AI’s Ethical Standard Assessment is a Must

Recognizing the elephant in the room will be the toughest obstacle.

You can read and share this article in Spanish <LINK>

There’s no avoiding it anymore: we urgently need an AI Ethical Standard (AIES), a framework that identifies how artificial intelligence was used in the creation of any human output – whether intellectual, creative, strategic, or operational – and to what extent it influenced the process, the structure, or the final result. And to be clear, it cannot and will not be a disclaimer, because at all times we humans are accountable.

AIES is simply a transparent record of how much AI influenced the creation of a work – nothing more and nothing less.

The most fitting approach is to start with one discipline and then expand to others. I propose starting with language outputs, since language is what sparked the LLM breakthrough in AI. The first task, then, is to recognize how much written work came into existence through AI’s intervention. I do agree, however, that some important concerns must be addressed.

There are two main fronts: implementation feasibility and ethical-philosophical resistance. The first should reasonably be handled in a collegiate format, as part of a second phase that begins only after the ethical-philosophical resistance has been confronted and brought into the public-square discussion we need to have.

Addressing the elephant in the room

The lack of integrity in today’s world is nothing less than the greatest and most disruptive force of our time – economically, politically, culturally, socially, and most particularly personally. We have grown so callous to our lack of integrity that it has become an undertow beneath every disruption across society and human endeavor. When we criticize politicians or corporations for their lack of ethical standards or immoral behavior, ‘we all fail to recognize the plank in our own eye and only see the speck of sawdust in theirs’ (Matthew 7:3–5). This callous ethical reality is the result of a lifetime of seemingly small, insignificant acts of dishonesty in everyday life.

It has a lot to do with our human nature and history, which is why I address this issue so often in my articles – to see if we can assess “the elephant in the room” by describing it from different perspectives.

So, given our difficulty in recognizing how compromised our ethical stance already is – in every human endeavor, whether social, corporate, political, or personal – proposing a standardized seal that identifies AI’s involvement in every text output may seem like an impossible goal.

However, I submit that all the alternatives are much worse. If integrity is already fragile, the amplifying power of AI will make dishonesty scale faster than ever before.

The Futility Argument

“No one will be honest about it.” You may think just that, but consider this. We have laws that draw a line to restrain our uncivilized tendencies as individuals and organizations: laws against tax evasion and corruption, even traffic rules. These laws don’t eliminate wrongdoing; they define accountability and provide recourse when boundaries are crossed.

Likewise, we can’t contain the use of AI, but we can define responsibility. Establishing how much AI was involved in human creation is the only way to preserve the line between human accountability and machine assistance.

The Freedom & Creativity Argument

Will this kind of standard stifle innovation or blur creative autonomy? I wrestle with that question myself as I write these lines. But the truth is, humans remain the only creative force – the intelligence and the drive behind any machine’s output. To judge a creator for using new tools is as absurd as dismissing an airbrush artist because a compressor helped shape the work.

The real issue is not whether we use machines, but where augmentation ends and deception begins. There is a vast difference between using AI to challenge our thinking and strengthen our structure, and letting it replace the very agency that makes the work ours.

The Ownership Paradox

Do people really care, or do we all just want results? If AI can solve social problems, increase food production, or help cure incurable diseases, do we really want to ask how we got there?

But then, what happens to credit and accountability? Will a scientist still receive a Nobel Prize if the breakthrough was co-authored by an algorithm? Will companies keep the royalties for products developed with AI assistance, when taxpayers and public institutions are footing much of the bill through research grants and infrastructure?

How much AI was truly involved may be impossible to determine – that is precisely why we must start asking these difficult questions now. Recognizing the ethical boundaries of AI is not a technical task; it is a civilizational one.

The Philosophical Question

I know this may seem the least relevant question, but is it? What truly makes us human is our capacity to reason, create, and relate to our work and to others in the way we choose – and one of the highest expressions of that capacity is spiritual and philosophical.

Are we virtue signaling when we use AI to produce our work? Will our authorship mean the same as it used to? There is no question that AI can turbocharge our intentions; therefore, knowing what our intentions are is crucial to any human endeavor that includes AI in the process, and setting a boundary on how much AI was involved in our output is of unavoidable consequence for our future.

The big questions are no longer optional. An AI Ethical Standard (AIES) is a boundary we cannot avoid setting, and we need to wrestle with these questions before our lack of integrity entangles our world even further.

We will continue digging deeper. Boundaries have always served a double purpose: to keep us from stepping into chaos, and to show us the chaos already within us – an invaluable asset in today’s world.