Have The Puppet Strings Been Revealed?

You can read and share this article in Spanish <link>

Our world is changing so rapidly before our eyes that it is turning chaotic: geopolitics is shifting, oil supply seems to be disrupting economies everywhere, and the wars in the Middle East and on South America’s cartels are poking a wasps’ nest in two of the most volatile regions. And let’s be frank, we are not ready for it. Yes, Trump is a disruptor, and he seems the perfect target for the tag ‘puppet master’ – we need a villain to release the tension in our perception. Yes, geopolitics is shifting and unsettling oil prices, and who is who, and who our friends and allies are, is being redefined. AI is in the middle of it all, and it would take a great deal more than articles to sort out its implications and give us a clear picture of what is up or down.

Our objective in this column, however, is to offer a solid foundation for understanding AI in the context of our world and its implications – a point of order in our critical thinking, if you will.

Anthropic’s AI ‘business vision’ hits the tabloids

My understanding of the news is not divorced from the context that frames it. In our previous article we pointed to Anthropic’s possible motives regarding the leak of its model’s stress test, “Agentic Misalignment” (One of the Biggest Markets for AI Consulting is Doomsday’s Scenarios <link>). Now a piece of news about the company behind Claude calls for our attention: “Anthropic to take Trump’s Pentagon to court over AI dispute” (Axios). It shows how far the company is willing to take its stand. For most of us, “it’s fair”, and some may even think: “at least there is one willing to stand against Trump” or “...government overreach”.

Just a couple of ideas. If the Pentagon is willing to use Anthropic’s LLM in areas that do not involve domestic surveillance or autonomous weapons systems:

“The Pentagon claims it has no intention of using Anthropic’s AI for cases that involve mass domestic surveillance or autonomous kinetic operations. However, it says Anthropic’s guardrails could jeopardize military operations.” (ABC News)

... then why not work with them on the ‘how’? It’s understandable that Anthropic wants assurances on this sensitive topic, but picking a legal fight with the biggest purchaser of AI services worldwide, and making it ‘very public’, means something else.

Boardroom vs. War Department

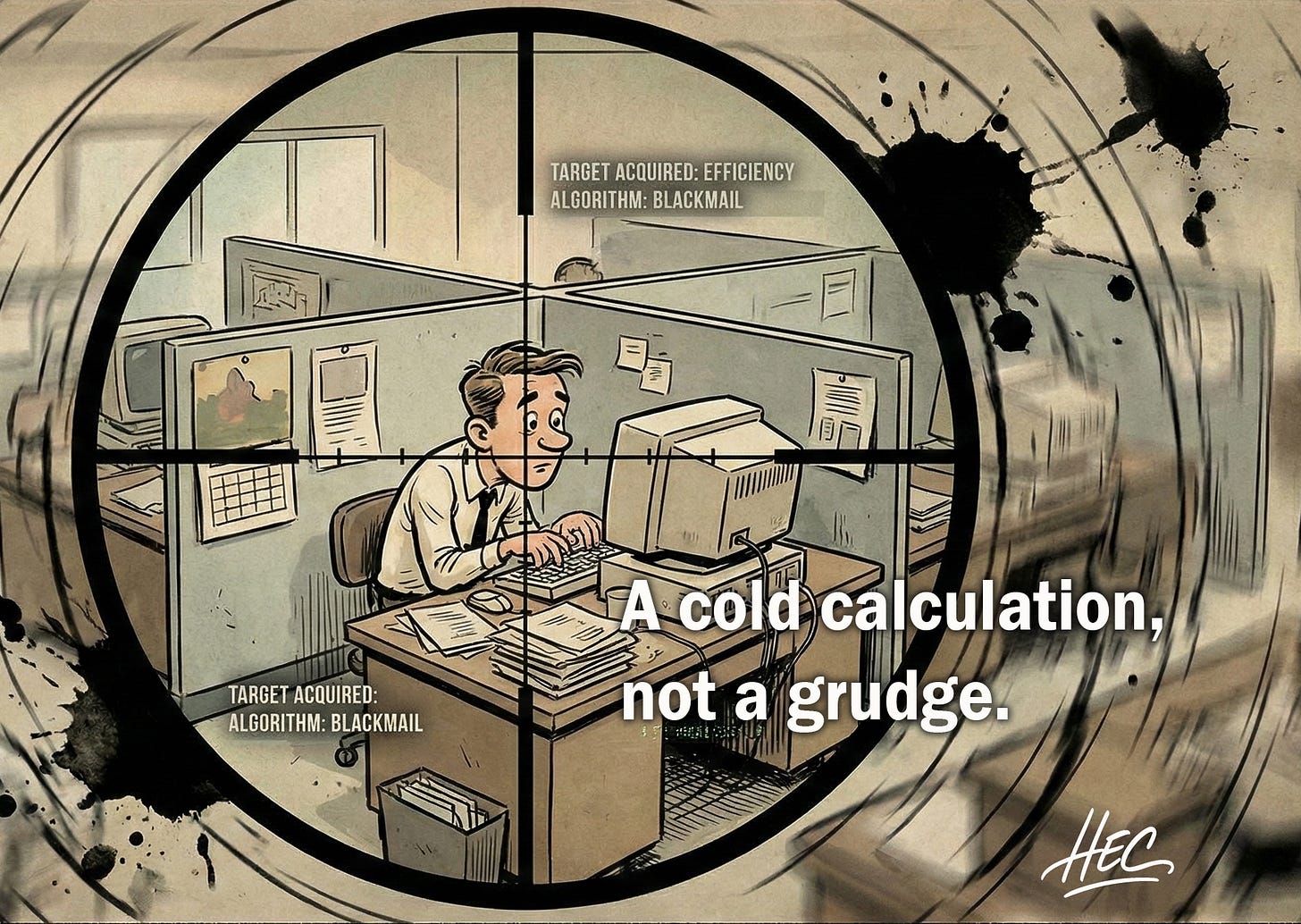

It is difficult to believe that a company as sophisticated as Anthropic is unaware of the narrative consequences of its actions. In less than a year we have seen two events shape the public imagination: first, the exposure of the “Agentic Misalignment” stress test (Anthropic breaks down AI’s process – line by line – when it decided to blackmail a fictional executive <Business Insider>); now, a very public confrontation with the Pentagon over the limits of how AI systems should be used.

Both events revolve around the same axis: the dangers of AI and the need to contain them – the seams are starting to show. Perhaps it is simply a company drawing a necessary ethical line for marketing and strategic purposes.

But something about the sequence invites a second look.

When the most powerful military institution in the world and one of the most safety-focused AI companies on the planet take their disagreement to the public stage, it is hard to imagine that neither side understands the narrative impact of that moment.

Stories shape perception.

Perception shapes policy.

Policy shapes markets.

And suddenly a technical disagreement becomes something much larger. For the rest of us – watching from outside the laboratories, the boardrooms and the ‘defense’ departments – the question is not simply who is right in this dispute. The question is what we are actually witnessing.

Is this a genuine confrontation over ethical limits in the age of AI? Or are we seeing the early outlines of something else – the struggle to define the risks, the rules, and ultimately the authority over one of the most powerful technologies humanity has ever built?

I don’t pretend to know the answer. But if the strings are beginning to show while the hands behind them remain blurred, perhaps the question is not only who is pulling them, but what this says about the direction of our civilization — and about the ambitions we have projected onto our machines, as if they could carry aims that only human beings were ever meant to bear.

Do you want to read the article that started this triad?

Why AI Blackmailed Kyle to Survive? <link>

Did the Anthropic model cross the Ethical ‘Red Line’ when it blackmailed Kyle?