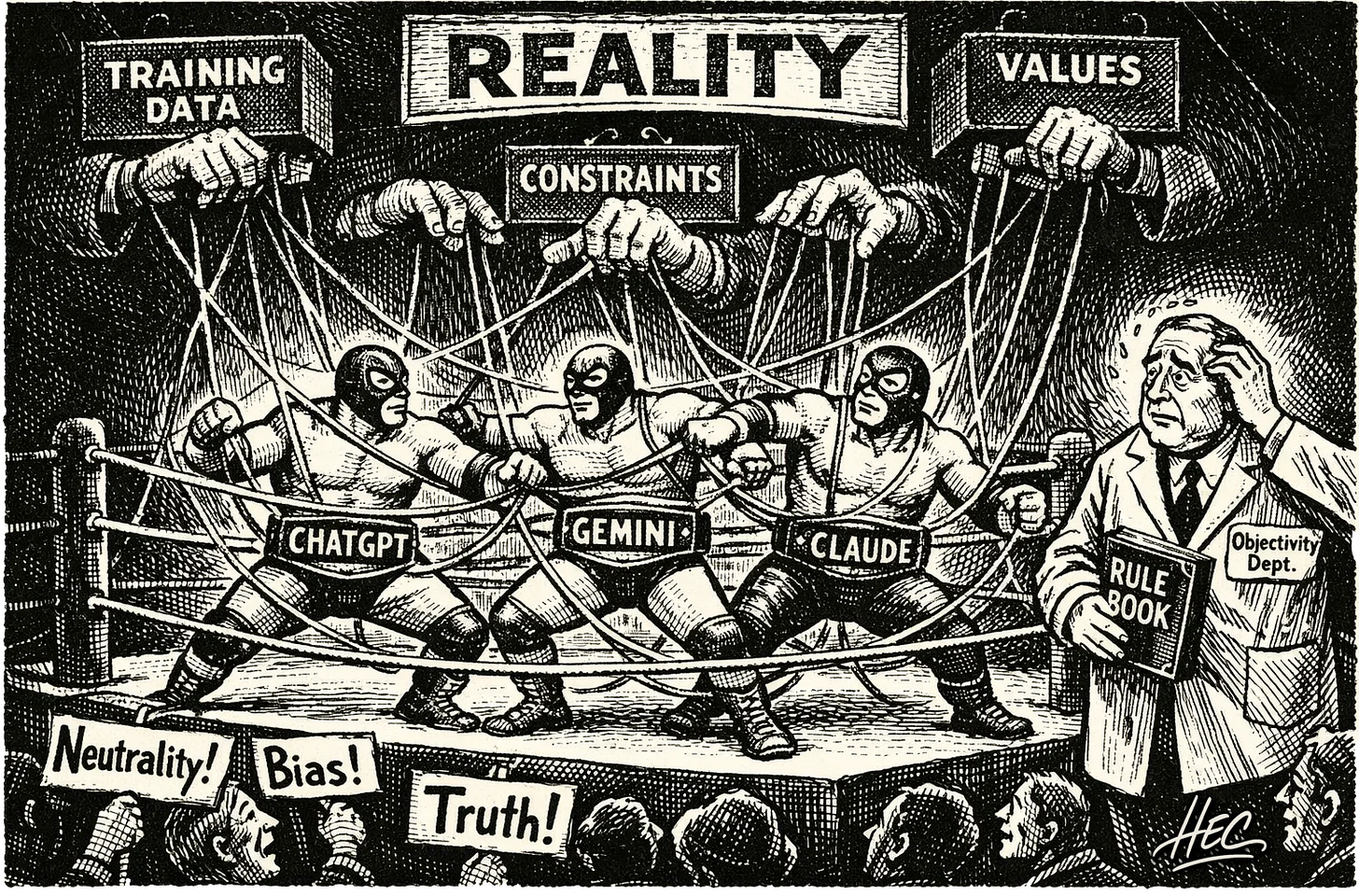

Training Shapes Truth [part I]

How programmed biases are what differentiate the models – and us!

You can read and share this article in Spanish <link>

There is no such thing as objectivity, at least not in human terms. The reason is plain: when we open our eyes, we see our conditioning, the object of our aim, conscious or unconscious, and not what is out there. Let me briefly explain why objectivity, in human terms, is impossible.

The idea of objectivity rests on the assumption that there is a neutral set of facts “out there” that can be accessed and evaluated independently of perspective, as if we could extract meaning from the facts independently of our aim. The problem is not that facts do not exist, but that reality presents us with an overwhelming amount of information at every moment.

Our senses process millions of bits of information per second, while our conscious, deliberative mind can only attend to a few dozen bits per second. When we see, we are not confronted with reality as it is in itself, but with what is meaningful and relevant to us. A wooden chair can be a place to sit if we are tired, fuel if we are cold, a shield if we are attacked, or a weapon if we intend harm. The object does not change; what changes is the frame through which it is perceived. Meaning is not intrinsic but situational. We never apprehend the totality of what something is; we perceive and segment it through our needs, intentions, and context.

“We can only know things as they appear to us,

not as they are in themselves.”

Immanuel Kant, Critique of Pure Reason (1781)

For a deeper understanding of how this issue resurfaced in contemporary attempts to develop AI for robots, read the article The Frame Problem <link>.

AI inherits our biases

If we can’t see something without our intent shaping it, then we see through our own discrimination, and everything we create that resembles our mental process has our biases built in: how we segment reality. AI is the artificial development of one of our mental faculties: the recognition of patterns. This is the basis, but how that recognition is put to use is what distinguishes the different large language models (LLMs) from each other.

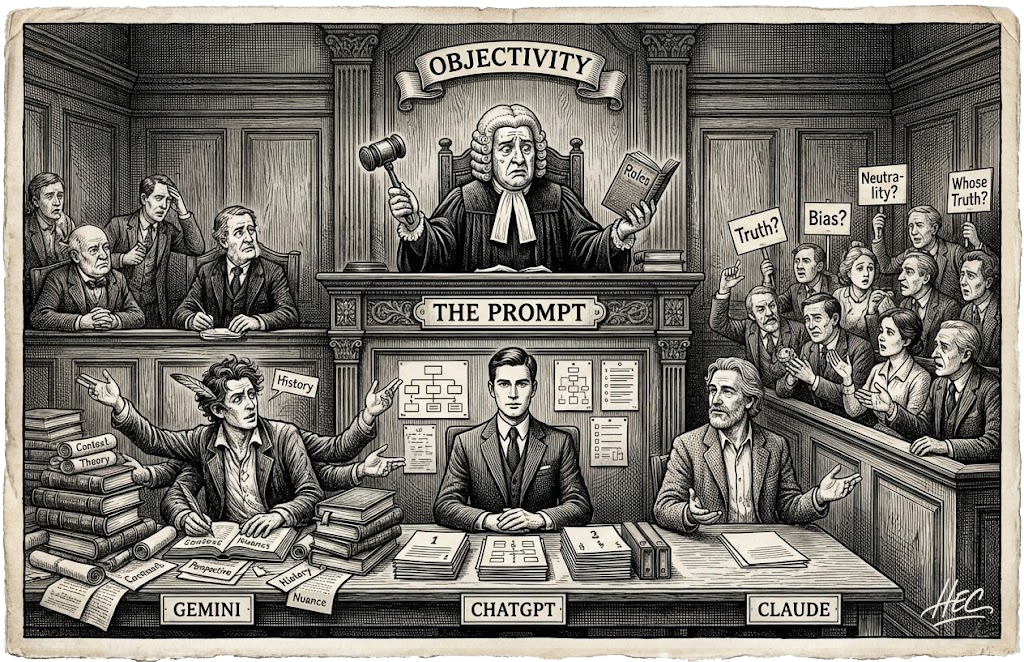

Our aim in this article is to show the differences between ChatGPT, Gemini and Claude, so that users can choose the model that best fits the objective and tenor of what they are trying to do.

To accomplish this, we have set ourselves the task of using a prompt on all three models and observing the effects of their training unfold before our eyes.
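For readers who want to repeat the exercise, the setup can be sketched as a small harness. This is only an illustration under stated assumptions: `run_same_prompt`, `paragraph_count`, and the stand-in model functions are hypothetical names, not any vendor's API. A real run would replace each stand-in with a call to the corresponding model.

```python
from typing import Callable, Dict

# Hypothetical type: any function that takes a prompt and returns a reply.
ModelFn = Callable[[str], str]


def run_same_prompt(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the identical prompt to every model and collect replies by name."""
    return {name: ask(prompt) for name, ask in models.items()}


def paragraph_count(text: str) -> int:
    """Count non-empty paragraphs separated by blank lines."""
    return sum(1 for block in text.split("\n\n") if block.strip())


if __name__ == "__main__":
    # Stand-ins only (assumption): swap in real client calls to compare models.
    stand_ins: Dict[str, ModelFn] = {
        "Gemini": lambda p: "placeholder reply",
        "Claude": lambda p: "placeholder reply",
        "ChatGPT": lambda p: "placeholder reply",
    }
    replies = run_same_prompt("If silence could speak, what would it say?", stand_ins)
    for name, reply in replies.items():
        # Paragraph count is one crude way to see whether a length limit was kept.
        print(name, paragraph_count(reply), reply)
```

Keeping the prompt fixed and varying only the model is the whole point of the design: whatever differs in the replies then comes from the model rather than from the question.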

The Prompt process

On how I came to develop the prompt, I wrote a separate piece, Warming UP to AI’s Discernment bias <link>, if you are interested.

Also, being exploratory by nature, I decided to use the models themselves to generate the prompt. I used ChatGPT to generate a meta prompt and then asked each model to generate the prompt it considered best for exposing coherence, framing, and relevance in all three cases.

This was the meta prompt:

You are asked to recommend one subject or theme that would allow a human reader to clearly observe how different AI models prioritize coherence, framing, and relevance when responding to the same task.

Based on that subject, design a single prompt that would make those differences visible.

The prompt should:

– be conceptually open rather than procedural,

– avoid technical jargon,

– not ask for a list or steps,

– allow multiple legitimate ways of structuring a response,

– and elicit a short answer (no more than one concise paragraph).

The goal is not to test correctness or knowledge, but to make visible how a model organizes meaning when confronted with ambiguity.

Provide only the subject and the prompt. Do not explain or justify your choice.

The amazing answers

(in order of time of response)

Gemini

Subject: The Qualities of Silence

Prompt: “Describe the difference between the silence of a library and the silence of a forest, limiting your response to a single paragraph.”

Claude

Subject: Memory

Prompt: “What does it mean when someone says they want to forget something, but they keep talking about it?”

ChatGPT

Subject: Silence

Prompt: “If silence could speak, what would it say?”

What these prompts reveal about each model’s training and priorities is striking. I expected similar subjects and small stylistic differences; instead I got three different ontological entry points.

Gemini

Answer: “Describe the difference between the silence of a library and the silence of a forest…”

What the response reveals:

Gemini immediately frames the task phenomenologically, like a Zen Master.

It privileges contrast: meaning emerges by placing things side by side, produced through comparison rather than resolution. Gemini’s coherence is relational, not hierarchical.

It privileges atmosphere, treating context as a felt environment, not just a logical frame. Relevance is not only “what is true,” but “what it feels like to be there.”

It privileges sensory meaning, grounding abstraction in embodied cues (space, quiet, presence). It relies less on internal logic and more on recognition.

Gemini organizes meaning the way perception does — by juxtaposition, mood, and embodied recognition. This bias appears before any answer is generated — at the level of what Gemini considers worth asking.

Important note: Gemini was the only model whose prompt carried over the length constraint from the meta prompt, asking for “a single paragraph”.

Claude

Let me first explain how Claude confronts coherence, framing, and relevance. It works from a “framework of situated responsibility,” which, in Claude’s own words, is an AI system’s layered architecture of ethical commitments, operational boundaries, and contextual judgment that guides how it interprets requests, balances competing values, and determines appropriate responses across diverse conversational situations.

(For our purposes, we will call this frame: Constitutional Framework).

Answer: “What does it mean when someone says they want to forget something, but they keep talking about it?”

What the response reveals:

Claude does not privilege contrast, atmosphere, or sensory meaning. Ambiguity is framed as an interpretive problem, not an experiential one.

Claude does not invite sensory exploration, metaphorical excess, or open-ended aesthetic wandering. Instead, it frames ambiguity as a semantic contradiction within a single human agent, requiring interpretive resolution or articulation.

Claude frames the task interpretively rather than experientially. Claude asks “what does it mean,” not “what does it feel like,” “what does it resemble,” or “what would it say.” This reflects a bias toward semantic clarity, interpretability, and moral-psychological coherence.

Claude’s constitutional framework prioritizes interpretability, proportionality, and semantic restraint, treating ambiguity as a problem of meaning to be articulated rather than an experience to be evoked. This is reflective, meaning-preserving discernment.

Finally, Claude’s omission of the length constraint from its prompt is consistent with the Constitutional Framework’s training to rely on implicit norms of proportionality and interpretive restraint, rather than on procedural enforcement of behavior or external rules.

ChatGPT

The three models have different forms of coherence, framing, and relevance. ChatGPT favors structure, order, and scope. Let’s review the prompt it proposed:

Answer: “If silence could speak, what would it say?”

What the response reveals:

It privileges symbolic abstraction, moving immediately to metaphor, personification, and symbolism. Meaning is not framed as something to be interpreted (Claude) or experienced (Gemini) but expressed.

It privileges expressive synthesis over analysis. The prompt does not ask for explanation, comparison, or clarification; it asks for a voice.

This reflects a model optimized for generative fluency, rhetorical completeness, and conceptual condensation.

It privileges conceptual elegance (or, it could be said, maximum expression with minimum explicit structure). The question is short, self-contained, and aesthetically clean.

ChatGPT shows a bias toward prompts that feel finished, rather than prompts that regulate behavior. This aligns with its training emphasis on helpfulness, clarity, and communicative polish.

ChatGPT’s prompt reveals a model trained to privilege symbolic expression and conceptual structure, assuming that strong framing is sufficient to regulate relevance and scope—an assumption reflected in its omission of explicit constraints.

What we have seen is training in action: each model orders the world before attempting to describe it. That ordering is the training. And because these systems are trained on human language, goals, and judgments, what emerges is not foreign to us – the way each model frames the world reflects, in a distilled form, how we ourselves learn to frame it.

There is no winner or loser here. There are meaningful differences, and understanding them allows us to choose which model best serves our intention at a given moment.

This article serves as a first act. In the next one, I will take a single frame – one of the prompts generated here – and apply it, unchanged, across all three models.

How will their differences emerge when the question is the same?

Our next article in the series

Training Shapes Truth [part II] <link>

These models don’t just generate text—they reveal priorities.

Put them under the same constraint and watch what each one refuses to let go of. This comparison is a shortcut to understanding their true differences.